Customer service in delivery and returns is changing shape. People still want empathy, but what they ask for is simpler: the latest confirmed update, what happens next, and what they can do.

As service entry shifts into conversational assistants and third-party interfaces, the winning pattern is service that stays grounded in operational truth and escalates complex cases with context.

If you want the full 2026 blueprint across retail shifts, capabilities, constraints, and what to do next, explore The new retail reality: Trust, proof, and the delivery experience in the AI era.

Quick links

- Why “truth beats tone” in delivery service

- The four requirements for service that scales

- The hallucination firewall

- What this looks like in delivery and returns

- Where nShift fits

- Get the full picture

- Frequently asked questions

Why “truth beats tone” in delivery service

Most post-purchase contacts are requests for certainty. Customers want:

- the most recent confirmed update

- what happens next

- what they can do

This is why conversational AI is moving beyond FAQs into bounded work like checking an order, interpreting a delay, initiating a return, and confirming refund status.

At the same time, customers increasingly expect to change deliveries mid-journey, such as redirecting to a pickup point, adjusting a delivery window, or confirming safe drop preferences. That requires authenticated, real-time change flows from carriers, and those changes must propagate into the same tracking story customers see.

For more insights, including external references, explore /retail-ecommerce-delivery-strategy-2026.

The four requirements for service that scales

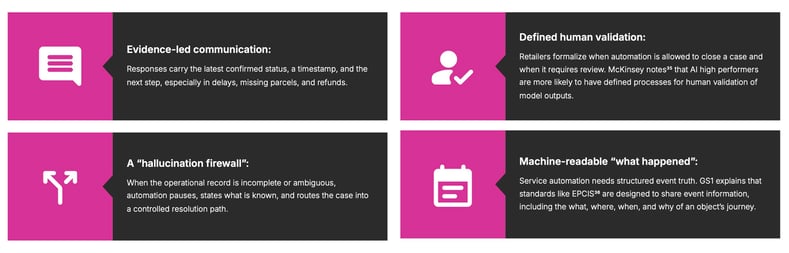

In our recent report, we outline four “what must be true” requirements for service automation that scales without losing the human moment.

What must be true for service that scales without losing the human moment

1) Evidence-led communication

Responses should carry the latest confirmed status, a timestamp, and the next step, especially in delays, missing parcels, and refunds. This reduces repeat contacts because customers can see progress and expected resolution steps.

Practical rule: if the system cannot cite a confirmed event and time, it should avoid confident claims.
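As an illustration of this rule, here is a minimal Python sketch of a reply builder that only makes a claim when it can cite a confirmed event and time. The `ConfirmedEvent` type, its field names, and the reply wording are assumptions made for this example, not part of any nShift or carrier API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ConfirmedEvent:
    """Hypothetical record of a carrier-confirmed event."""
    status: str            # e.g. "Out for delivery"
    occurred_at: datetime  # timezone-aware time the event was confirmed
    next_step: str         # what the customer should expect next


def compose_reply(event: Optional[ConfirmedEvent]) -> str:
    """Cite a confirmed event if one exists; otherwise avoid confident claims."""
    if event is None:
        # Nothing confirmed to cite, so say so instead of guessing.
        return ("We do not have a confirmed update for this parcel yet. "
                "We will pass your case to an agent for review.")
    # Assumes occurred_at is timezone-aware; normalise to UTC for display.
    stamp = event.occurred_at.astimezone(timezone.utc).strftime("%Y-%m-%d %H:%M UTC")
    return (f"Latest confirmed status: {event.status} "
            f"(confirmed {stamp}). Next step: {event.next_step}")
```

The reply carries the three elements named above: the latest confirmed status, a timestamp, and the next step.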

2) Defined human validation

Retailers need clear rules for when automation can close a case and when it must escalate for review. The report cites McKinsey’s observation that AI high performers are more likely to have defined processes for human validation of model outputs.

Practical rule: money-impacting decisions should have designed review paths until evidence and controls are strong.
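One way to make such rules explicit is a small routing function. The sketch below is illustrative only: the case types, the allowlist, and the `CaseDecision` fields are hypothetical, and a real policy would be defined by the retailer.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_CLOSE = "auto_close"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class CaseDecision:
    """Hypothetical summary of what automation proposes to do."""
    case_type: str            # e.g. "tracking_query", "refund"
    money_impacting: bool     # does the decision move money?
    evidence_confirmed: bool  # is a confirmed event backing the decision?


# Assumed allowlist of case types considered safe to close automatically.
AUTO_CLOSE_CASE_TYPES = {"tracking_query", "delivery_window_info"}


def route_case(decision: CaseDecision) -> Route:
    """Decide whether automation may close the case or must escalate."""
    if decision.money_impacting:
        # Money-impacting decisions always take the designed review path here.
        return Route.HUMAN_REVIEW
    if not decision.evidence_confirmed:
        # Without confirmed evidence, automation should not close the case.
        return Route.HUMAN_REVIEW
    if decision.case_type in AUTO_CLOSE_CASE_TYPES:
        return Route.AUTO_CLOSE
    # Unknown case types default to human review.
    return Route.HUMAN_REVIEW
```

Defaulting to review for anything not explicitly allowed keeps the rule conservative; the money-impacting check could be relaxed later as evidence and controls mature.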

For the broader foundation behind this, read: Automation that earns trust in the AI era

3) Machine-readable “what happened”

Service automation needs structured event truth. The report references GS1 and EPCIS as standards designed to share event information, including the what, where, when, and why of an object’s journey.

This matters because assistants can only resolve delivery and returns issues when “what happened” is consistent across carriers and markets.
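To make the idea concrete, the following Python sketch normalises carrier-specific status codes into one shared event shape built around the what, where, when, and why. It is loosely inspired by those four dimensions and is not the EPCIS schema; the carrier names, status codes, and field names are all invented for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class JourneyEvent:
    """Hypothetical normalised event: one shape for every carrier."""
    what: str       # object identifier, e.g. a parcel ID
    where: str      # location where the event was observed
    when: datetime  # time the event occurred
    why: str        # business meaning, in a shared vocabulary


# Assumed mapping from (carrier, carrier-specific code) to a shared vocabulary.
CARRIER_CODE_MAP = {
    ("carrier_a", "DLV"): "delivered",
    ("carrier_b", "delivered_ok"): "delivered",
    ("carrier_a", "RTN_DROP"): "return_dropped_off",
    ("carrier_b", "return_received_at_point"): "return_dropped_off",
}


def normalize(carrier: str, code: str, parcel_id: str,
              location: str, when: datetime) -> JourneyEvent:
    """Translate a carrier-specific status into the shared event shape."""
    # Unmapped codes become "unknown" rather than being guessed at.
    why = CARRIER_CODE_MAP.get((carrier, code), "unknown")
    return JourneyEvent(what=parcel_id, where=location, when=when, why=why)
```

With this shape, an assistant can ask one question ("is there a `delivered` event?") regardless of which carrier handled the parcel.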

Deep dive: GS1 EPCIS and the future of real-time delivery visibility in 2026

4) The hallucination firewall

When the operational record is incomplete or ambiguous, automation should pause, state what is known, and route the case into a controlled resolution path.

This is the most important design pattern for protecting trust at scale. A polite answer that is wrong creates more contacts, more goodwill spend, and higher dispute cost than a factual answer that escalates cleanly.

The hallucination firewall (definition + example)

Hallucination firewall: a service safeguard that prevents automation from making unsupported claims. When evidence is missing or conflicting, the system responds with confirmed facts only, and escalates with context.

Example: “Where is my refund?”

- If the system has a confirmed return drop-off event and timestamp, it can answer confidently and set expectations.

- If the system lacks proof of drop-off, it should say that clearly and guide the customer to the next step, including escalation if needed.

This approach protects both the customer experience and internal cost-to-serve.
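The refund example can be sketched in Python as follows. This is a minimal illustration under stated assumptions: the `ReturnEvent` and `FirewallResult` types, the `return_dropped_off` event kind, and the rule that differing drop-off times count as a conflict are all hypothetical choices made for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class ReturnEvent:
    """Hypothetical event from the operational record."""
    kind: str              # e.g. "return_dropped_off"
    occurred_at: datetime  # timezone-aware event time
    source: str            # system or carrier that reported the event


@dataclass
class FirewallResult:
    reply: str
    escalate: bool
    context: List[str] = field(default_factory=list)  # handed to the agent


def answer_refund_query(events: List[ReturnEvent]) -> FirewallResult:
    """Answer "Where is my refund?" using confirmed facts only."""
    drop_offs = [e for e in events if e.kind == "return_dropped_off"]
    if not drop_offs:
        # Missing evidence: say so clearly and escalate with context.
        return FirewallResult(
            reply=("We have no confirmed drop-off for your return yet. "
                   "An agent will review your case."),
            escalate=True,
            context=["no confirmed return drop-off event on record"],
        )
    if len({e.occurred_at for e in drop_offs}) > 1:
        # Conflicting evidence (assumed rule: sources disagree on the time).
        return FirewallResult(
            reply=("We have a record of your return, but the drop-off details "
                   "do not match across our sources. An agent will review your case."),
            escalate=True,
            context=[f"{e.source}: drop-off at {e.occurred_at.isoformat()}"
                     for e in drop_offs],
        )
    stamp = drop_offs[0].occurred_at.astimezone(timezone.utc).strftime("%Y-%m-%d %H:%M UTC")
    return FirewallResult(
        reply=(f"Your return drop-off was confirmed on {stamp}. "
               "We will confirm your refund once the return has been processed."),
        escalate=False,
    )
```

The sketch deliberately avoids stating a refund timeline, since none is available in the record it works from.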

What this looks like in delivery and returns

As conversational service becomes more common, the goal is not to automate everything. The report describes the direction of travel as constrained autonomy: define which case types are safe for end-to-end automation and which should always route through human judgement.

In delivery and returns, customers increasingly ask “based on what?” when given a status, eligibility decision, or refund timeline. That expectation rewards systems that map answers to observable events and stated policies.

If you want the full capability map and “what to do next,” explore /retail-ecommerce-delivery-strategy-2026.

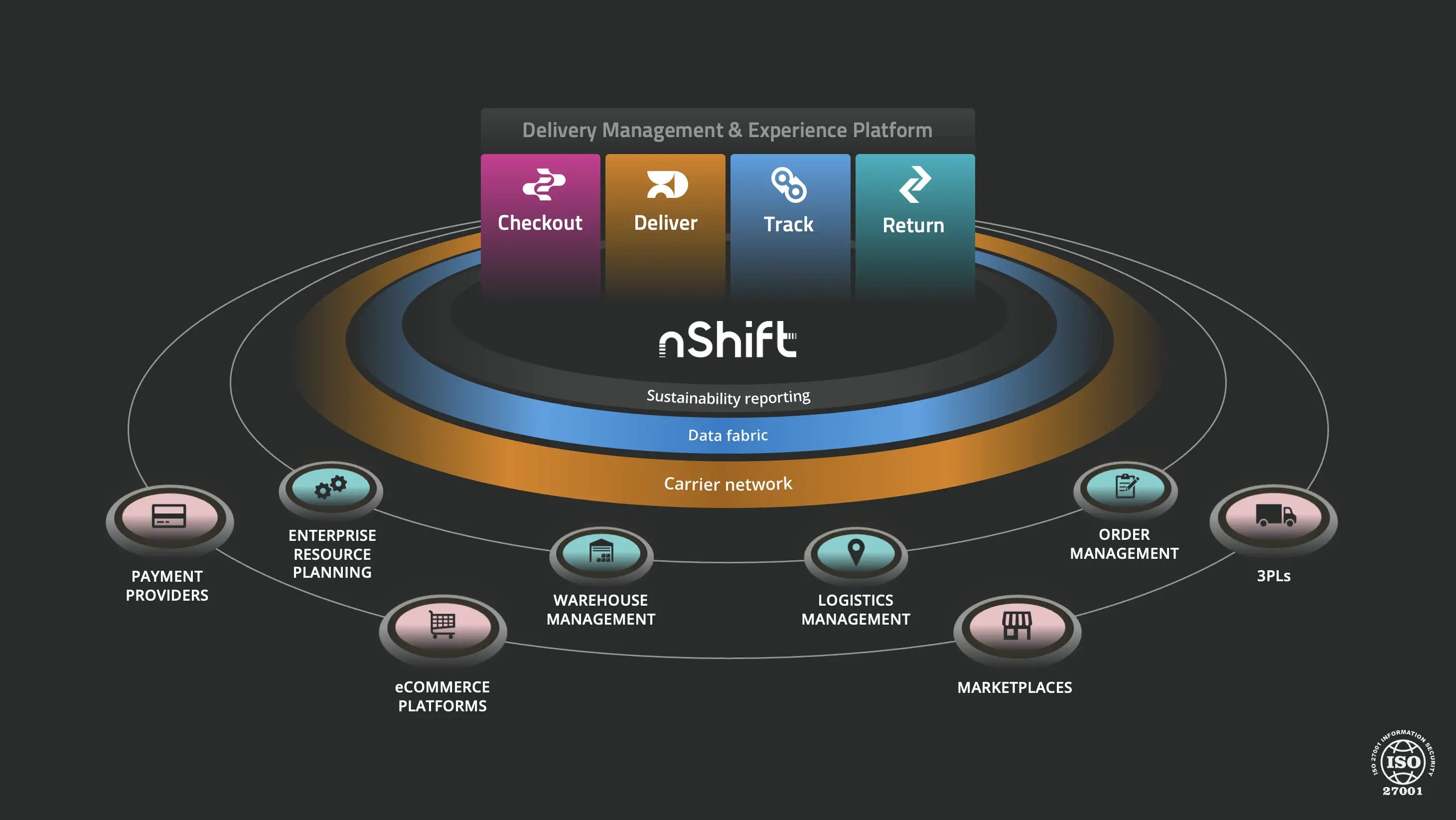

Where nShift fits

nShift's delivery management platform supports post-purchase journeys where customers look for certainty, including tracking and returns. That is why we focus on service automation that can reference confirmed events, handle routine questions cleanly, and escalate with full context when judgement is required.

In practical terms, this depends on one coherent post-purchase story across carriers and channels, so customers and support teams work from the same operational truth.

The takeaway

In 2026, scalable service depends less on tone and more on stability. Confirm what is known. Make uncertainty visible. Resolve the case when the record supports it. Escalate when judgement is required.

Get the full picture

This article is part of our research on “The new retail reality: Trust, proof, and the delivery experience in the AI era”, which covers what’s changing in retail delivery, the shifts in customer expectations, and what to do to make your delivery strategy hold up at scale.

For the complete picture, download the full report: The new retail reality 2026.

Frequently asked questions

What is “constrained autonomy” in customer service?

A service design approach where leaders define which case types are safe for end-to-end automation and which require human judgement and escalation paths.

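How do you prevent AI hallucinations in delivery support?

Use a hallucination firewall: a safeguard that prevents automation from making unsupported claims. When evidence is missing or conflicting, the system responds with confirmed facts only and escalates with context.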

What should an AI assistant include in delivery and returns responses?

The latest confirmed status, a timestamp, and the next step, especially for delays, missing parcels, and refunds.

Why does “machine-readable what happened” matter?

Because assistants can only resolve issues when “what happened” is consistent across carriers and markets. Standards like EPCIS help structure that event truth.

Will third-party assistants replace customer service channels?

The report cites Gartner’s prediction that by 2028, 70% of customer service journeys may begin and end in third-party conversational assistants built into mobile devices. This increases the value of consistent post-purchase truth that works across channels.

About the author

Thomas Bailey

Thomas plays a key role in shaping how new features and platform improvements deliver real value to customers. With a background spanning product, tech, and go-to-market strategy, he brings a pragmatic view of what innovation looks like in practice and how to make delivery experiences work harder for your business.